Another contribution is a demonstration that transfer learning effectively mitigates overfitting. The recipe: pretrain on a large image database, then fine-tune on a small dataset, e.g., CIFAR-10.

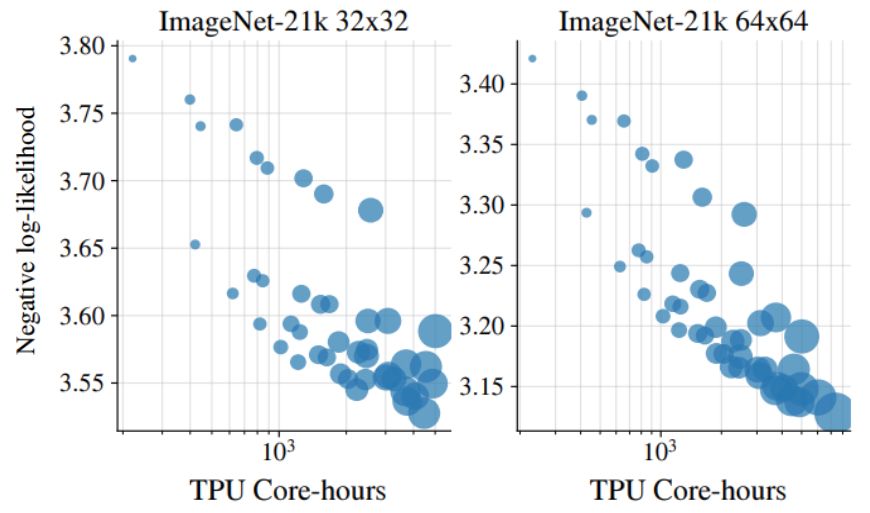

These are the results of varying the Jet model size when training on ImageNet-21k images:

❌ invertible dense layer

❌ ActNorm layer

❌ multiscale latents

❌ dequant. noise

Jet is one of the key components of JetFormer, deserving a standalone report. Let's unpack: 🧵⬇️

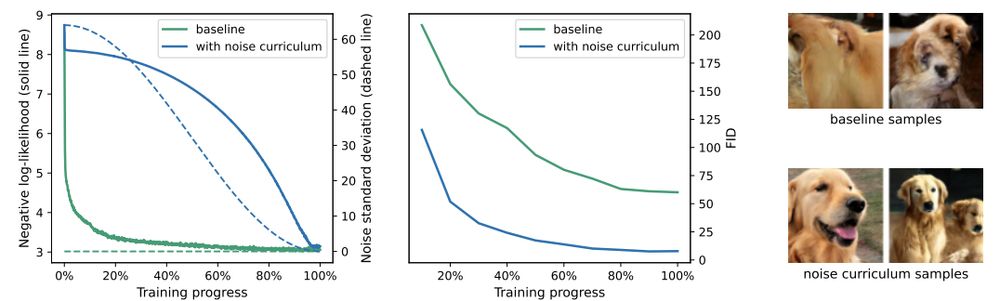

Even though it is inspired by diffusion, it is very different: it only affects training and does not require iterative denoising during inference.

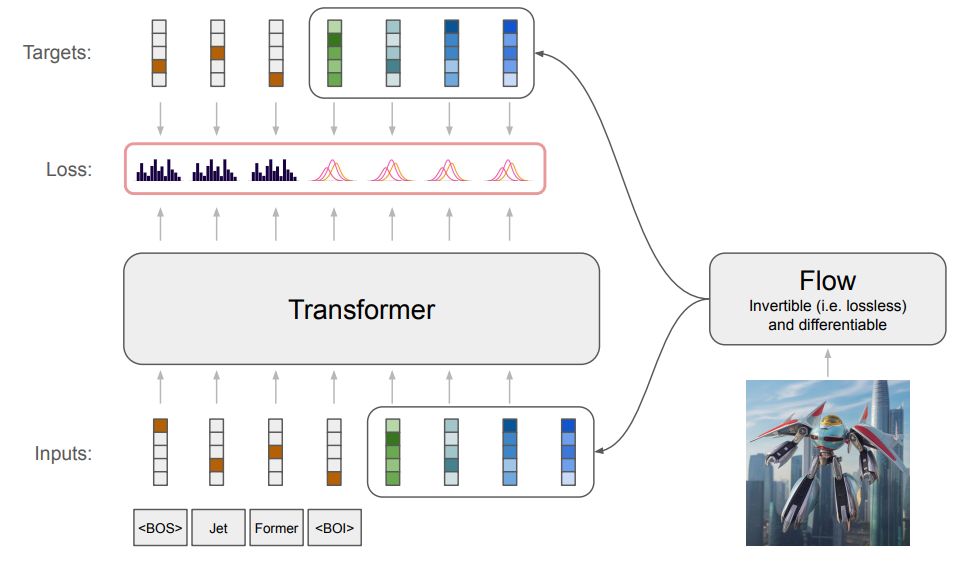

There is a small twist though. An image input is re-encoded with a normalizing flow model, which is trained jointly with the main transformer model.
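
For readers new to normalizing flows, here is a minimal sketch of the general idea, not the actual Jet architecture. It assumes a single affine coupling layer, a hypothetical `toy_scale_shift` function standing in for a learned network, and a standard normal density standing in for whatever model scores the latents (in JetFormer that role is played by the transformer). Inputs are assumed to have even length.

```python
import math

def coupling_forward(x, scale_shift_fn):
    """Affine coupling: keep the first half, transform the second half
    conditioned on the first. Returns latents and log|det Jacobian|."""
    half = len(x) // 2
    x1, x2 = x[:half], x[half:]
    log_s, t = scale_shift_fn(x1)
    z2 = [v * math.exp(ls) + b for v, ls, b in zip(x2, log_s, t)]
    log_det = sum(log_s)  # Jacobian is triangular, so log-det is a sum
    return x1 + z2, log_det

def coupling_inverse(z, scale_shift_fn):
    """Exact inverse of coupling_forward."""
    half = len(z) // 2
    z1, z2 = z[:half], z[half:]
    log_s, t = scale_shift_fn(z1)
    x2 = [(v - b) * math.exp(-ls) for v, ls, b in zip(z2, log_s, t)]
    return z1 + x2

def toy_scale_shift(x1):
    # Hypothetical stand-in for a learned network (assumption, not from Jet).
    log_s = [math.tanh(v) for v in x1]
    t = [0.5 * v for v in x1]
    return log_s, t

def standard_normal_logpdf(z):
    # Assumed latent density; a stand-in for the model that scores latents.
    return sum(-0.5 * (v * v + math.log(2 * math.pi)) for v in z)

def flow_nll(x, scale_shift_fn):
    """Change of variables: log p(x) = log p(z) + log|det dz/dx|."""
    z, log_det = coupling_forward(x, scale_shift_fn)
    return -(standard_normal_logpdf(z) + log_det)
```

Because the mapping is exactly invertible and its log-determinant is cheap to compute, the flow and the model that scores its latents can be optimized together with a single likelihood objective.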

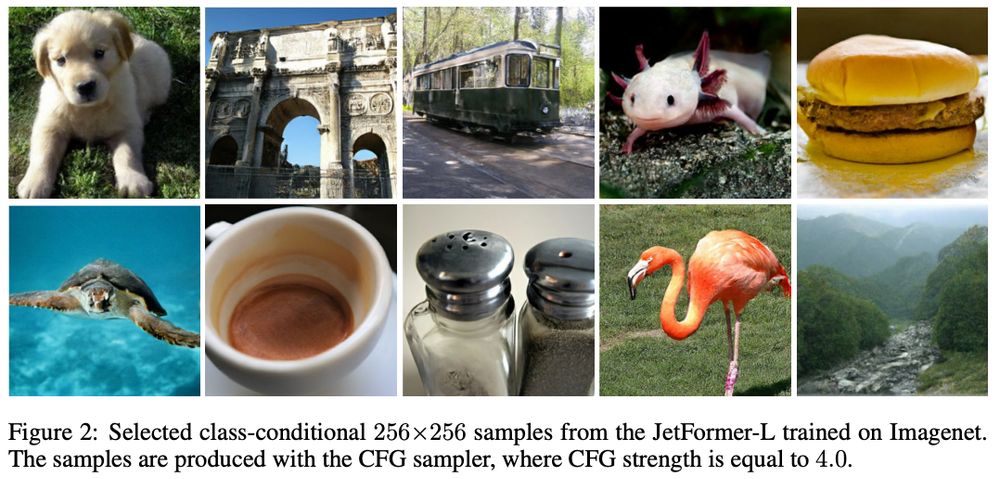

1. optimizes NLL of raw pixel data,

2. generates competitive high-res. natural images,

3. is practical.

But it seemed too good to be true. Until today!

Our new JetFormer model (arxiv.org/abs/2411.19722) ticks all of these boxes.

🧵

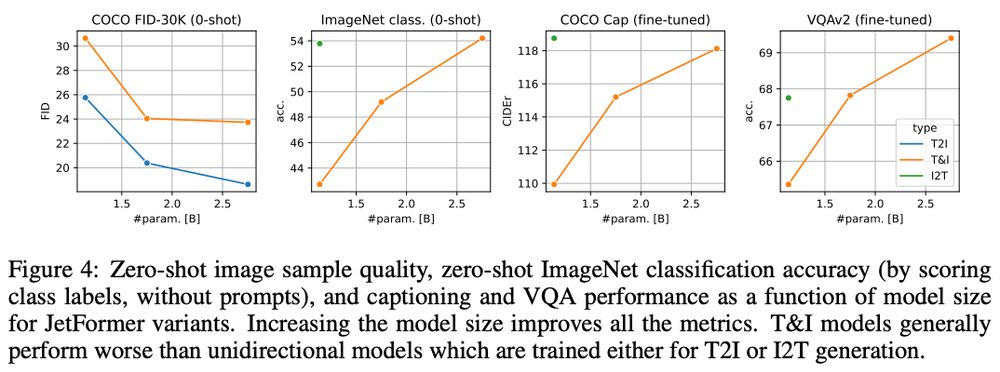
