Writes http://interconnects.ai

At Ai2 via HuggingFace, Berkeley, and normal places

Sorry, living under rocks today.

"a few hours" and the model is on HuggingFace.

www.chinatalk.media/p/the-zai-pl...

Me:

> This dataset is provided for...

> Evaluating information retrieval and retrieval augmented generation (RAG) systems.

> It is not intended for: Fine-tuning language models.

??

Sorry to all who delayed releases today to get out of our way.

We're hiring.

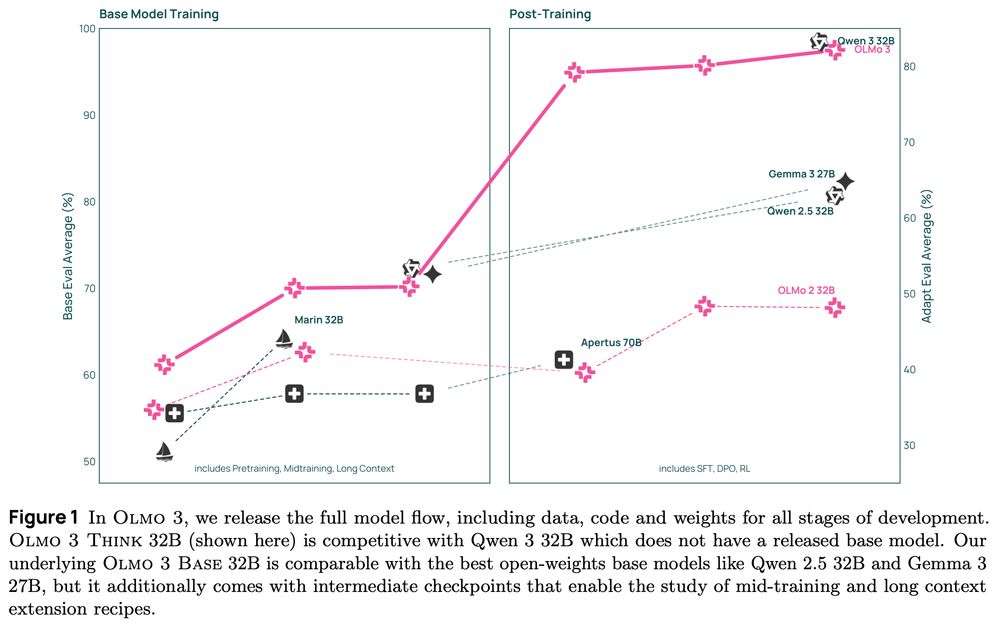

This family of 7B and 32B models represents:

1. The best 32B base model.

2. The best 7B Western thinking & instruct models.

3. The first 32B (or larger) fully open reasoning model.

scholar.google.com/scholar_labs...

Excited to land in print in early 2026! Lots of improvements coming soon.

Thanks for the support!

hubs.la/Q03Tc37Q0

I'm building up a much richer (and direct) understanding of Chinese AI labs. Excited to share more here soon :)

Congrats to the Moonshot AI team on the awesome open release. For close followers of Chinese AI models, this isn't shocking, but more inflection points are coming. Pressure is building on US labs with more expensive models.

www.interconnects.ai/p/kimi-k2-th...

job-boards.greenhouse.io/thealleninst...

All models, datasets, code, etc. released.

Really excited about this project! Sharan, the lead student author, was a joy to work with.

They're very neglected vis-a-vis the much easier inference-time scaling plots.

Excited to see more companies able to train models that suit their needs. It bodes very well for the ecosystem that specific data is stronger than a bigger, general model.
