Professor of Computer Science at TU Berlin,

Today, Erik presents a novel attack on Google's latest AI weather model at #CCS2025. By changing only 0.1% of the observations, the attack can fabricate or suppress the prediction of extreme events, from hurricanes 🌀 to heat waves 🔥

1/4 @bifold.berlin

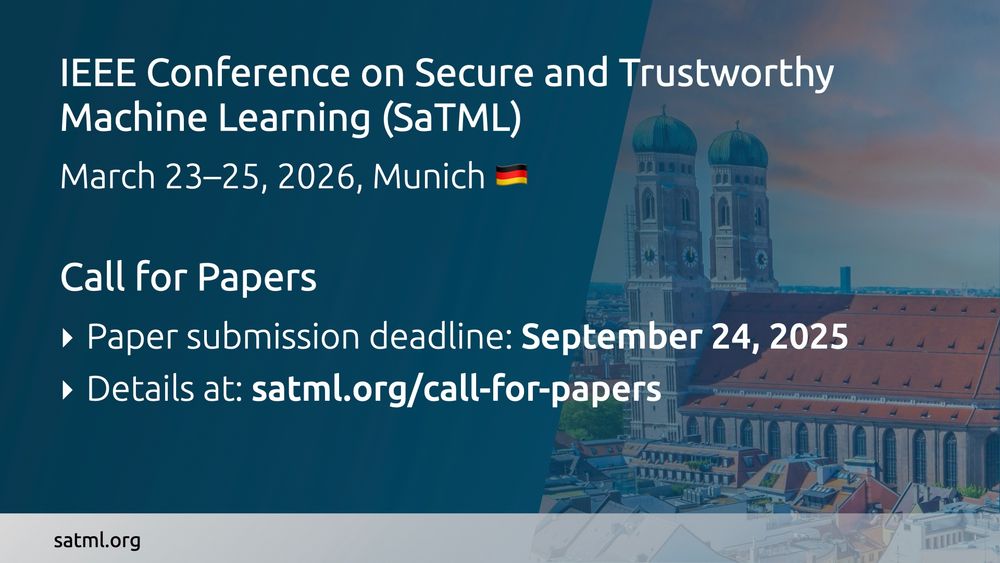

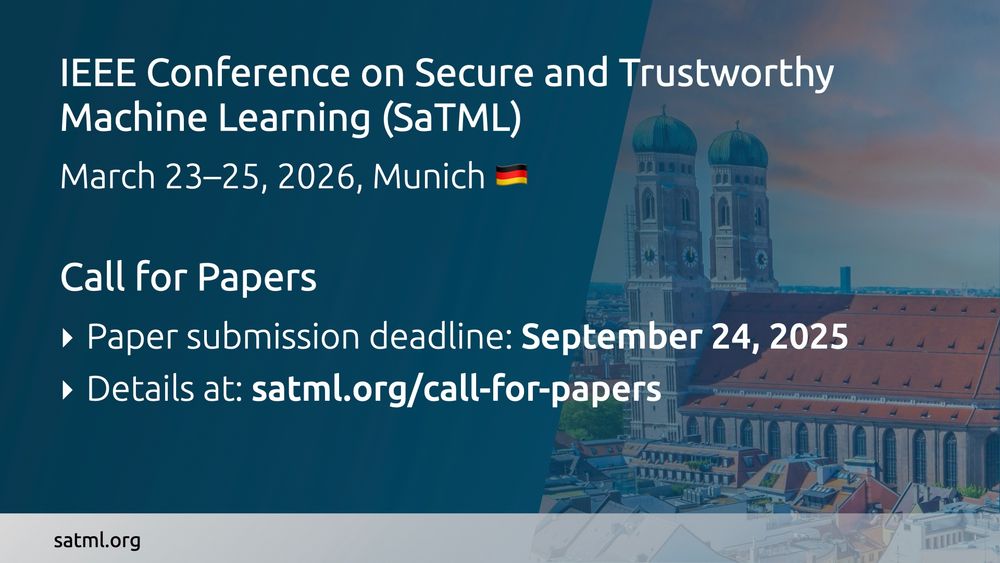

@satml.org has you covered! We are eager to read your work on the security, privacy, and fairness of AI.

👉 satml.org/call-for-pap...

⏰ Deadline: Sep 24

The @satml.org paper deadline is just 9 days away. We are looking forward to your work on security, privacy, and fairness in machine learning.

👉 satml.org/call-for-pap...

⏰ Sep 24

🗓️ Deadline: Sept 24, 2025

We have also updated our Call for Papers with a statement on LLM usage. Check it out:

👉 satml.org/call-for-pap...

@satml.org

@satml.org is now accepting proposals for its Competition Track! Showcase your challenge and engage the community.

👉 satml.org/call-for-com...

🗓️ Deadline: Aug 6

@icmlconf.bsky.social!

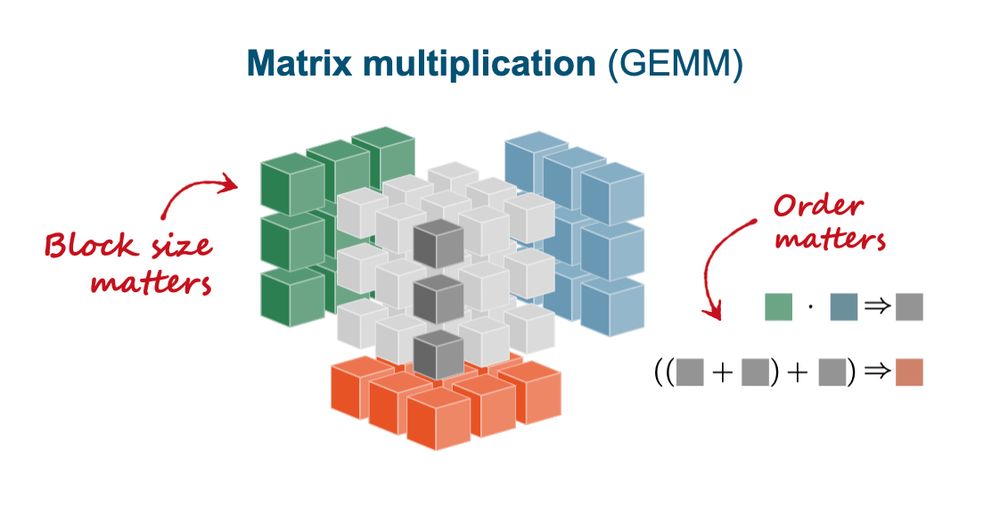

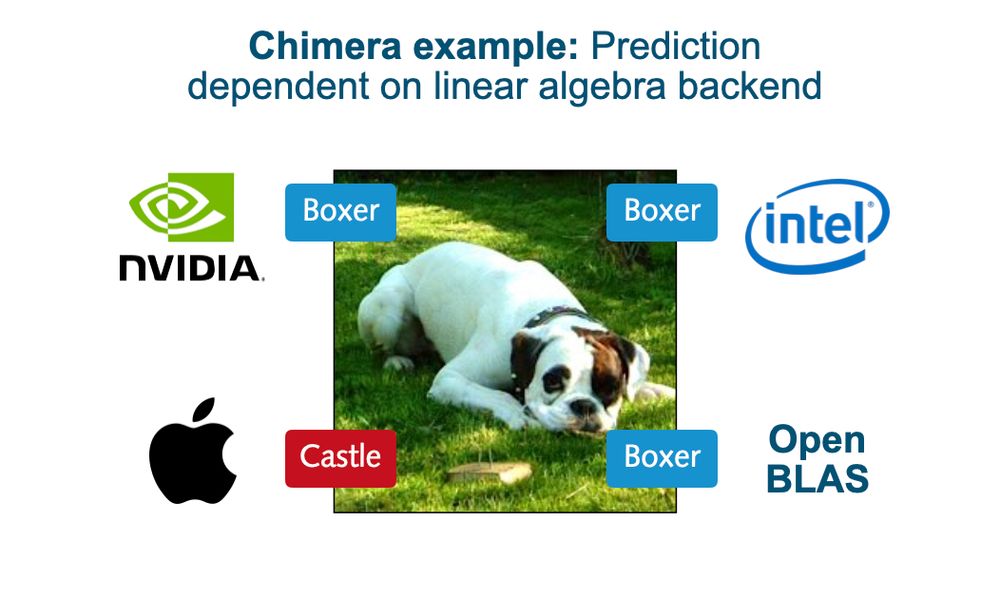

We exploit subtle numerical differences between linear algebra backends and craft inputs that yield different predictions from the same model depending on the backend used 🤯 mlsec.org/docs/2025-ic...

1/4
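A toy sketch of the underlying effect, not the method from the paper: floating-point addition is not associative, so two backends that accumulate the same dot product in a different order can return different scores. The function names, the two-lane accumulation scheme, and the 0.5 threshold below are all hypothetical choices made for illustration.

```python
# Toy illustration (assumption: not the attack from the paper).
# Two "backends" compute the same dot product but sum in a different order.

def dot_sequential(w, x):
    """Hypothetical backend A: accumulate strictly left to right."""
    acc = 0.0
    for wi, xi in zip(w, x):
        acc += wi * xi
    return acc

def dot_two_lanes(w, x):
    """Hypothetical backend B: two partial sums (even/odd indices), then combine."""
    lanes = [0.0, 0.0]
    for i, (wi, xi) in enumerate(zip(w, x)):
        lanes[i % 2] += wi * xi
    return lanes[0] + lanes[1]

def predict(score, threshold=0.5):
    """Binary decision on the score; the threshold is an arbitrary choice."""
    return int(score > threshold)

# Input crafted so that the small term is absorbed in one summation order
# but survives in the other.
w = [1.0, 1.0, -1.0]
x = [1e16, 1.0, 1e16]

score_a = dot_sequential(w, x)  # (1e16 + 1.0) - 1e16
score_b = dot_two_lanes(w, x)   # (1e16 - 1e16) + 1.0

print(score_a, predict(score_a))
print(score_b, predict(score_b))
```

Both functions are mathematically equivalent, yet the rounding in the first one swallows the small term, which is enough to move the score across the decision threshold.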

@satml.org

The competition track has been a highlight of SaTML, featuring exciting topics and strong participation. If you’d like to host one for SaTML 2026, visit:

👉 satml.org/call-for-com...

⏰ Deadline: Aug 6

We seek papers on secure, private, and fair learning algorithms and systems.

👉 satml.org/call-for-pap...

⏰ Deadline: Sept 24

Join us at SaTML 2025, the premier conference on AI security, AI privacy, and AI fairness!

👉 satml.org/attend

@satml.org

Today, Julian presents our work on implanting machine learning backdoors in hardware at @acsacconf.bsky.social. Our backdoors reside within a hardware ML accelerator, where they manipulate models on the fly and remain invisible from the outside.

mlsec.org/docs/2024-ac...

1/3

Registration for IEEE SaTML is now open: satml.org

We are also offering travel scholarships: satml.org/scholarships/

👉 satml.org/keynotes/

Don’t miss out on #SaTML2025 in Copenhagen🇩🇰, April 2025!

Two weeks until the SaTML paper deadline! We’re eager to see your work on secure, private, and fair machine learning, as well as any other aspects of machine learning system security.

👉 satml.org/participate-...

⏰ Deadline: Sep 18

You can find all competitions and their websites here:

satml.org/competitions/

We seek papers on secure, private, and fair machine learning algorithms and systems.

👉 satml.org/participate-...

⏰ Deadline: Sep 18
