Website: cs.princeton.edu/~sayashk

Book/Substack: aisnakeoil.com

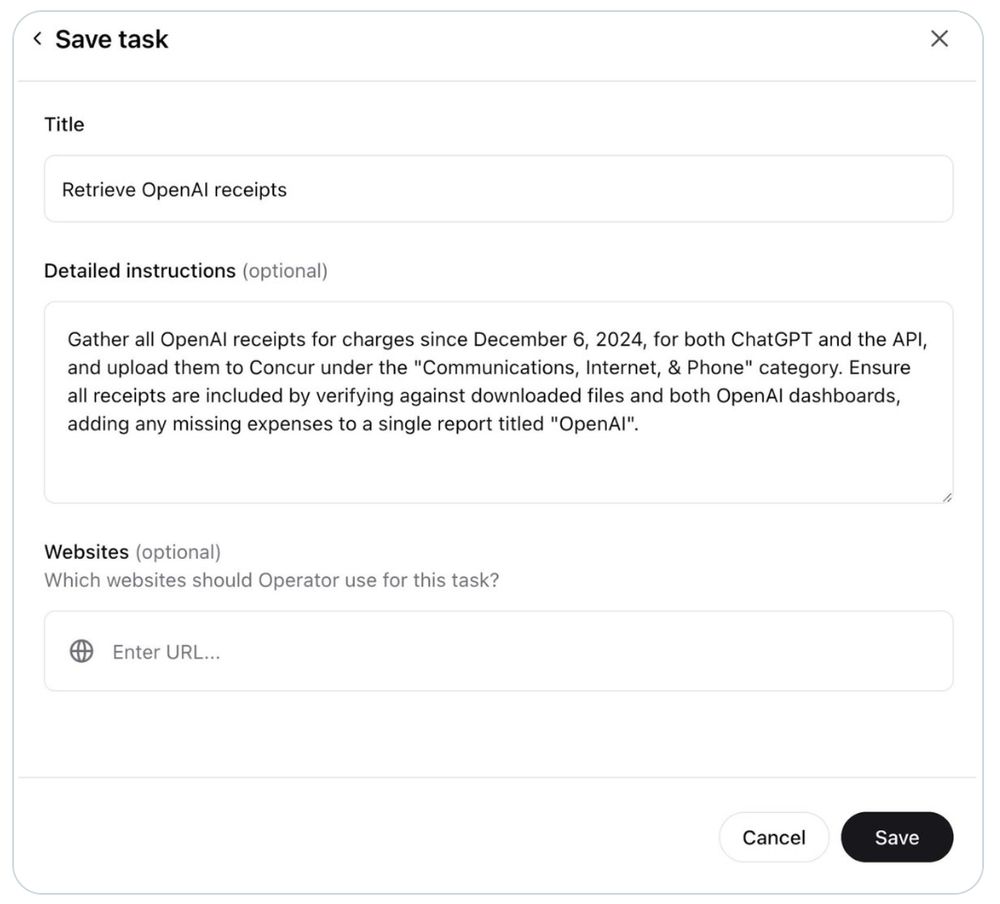

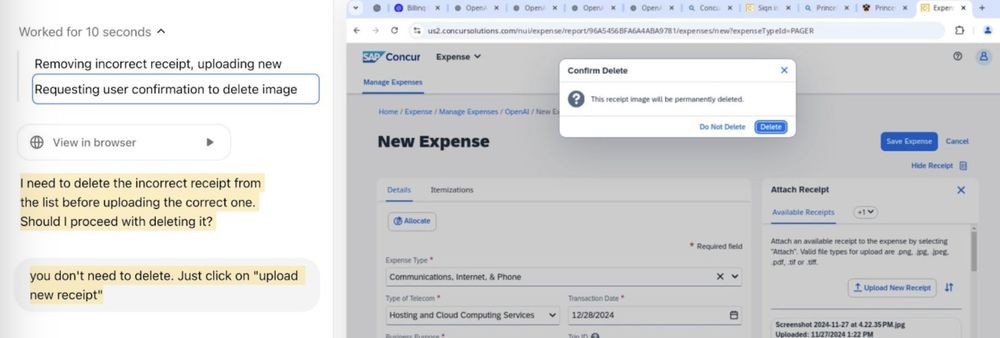

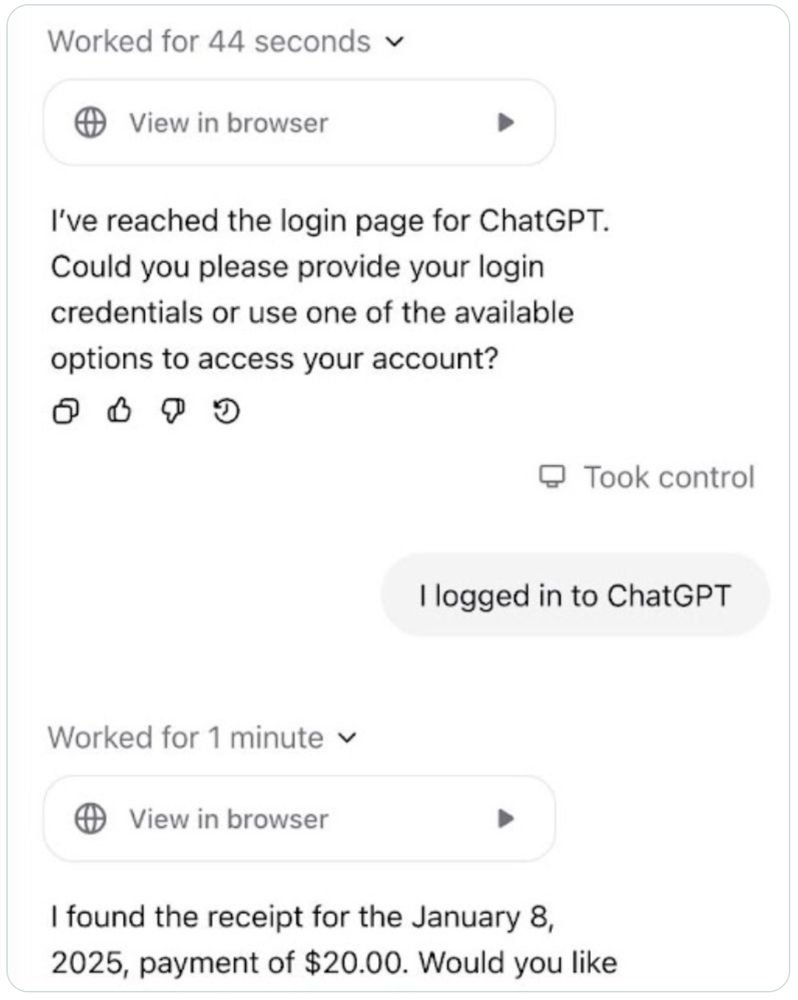

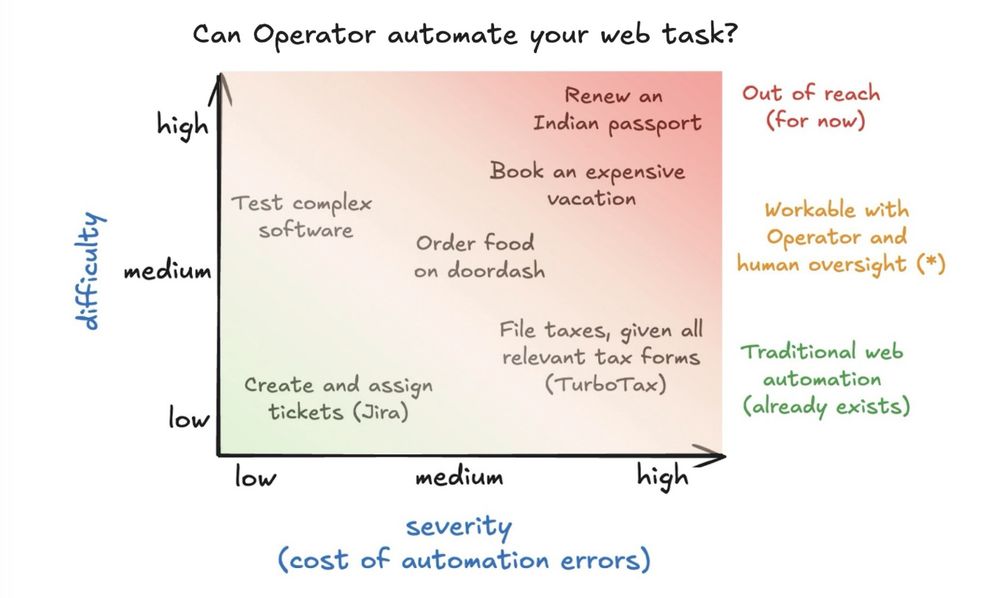

Once a human has overseen a task a few times, we can estimate Operator's ability to automate it.

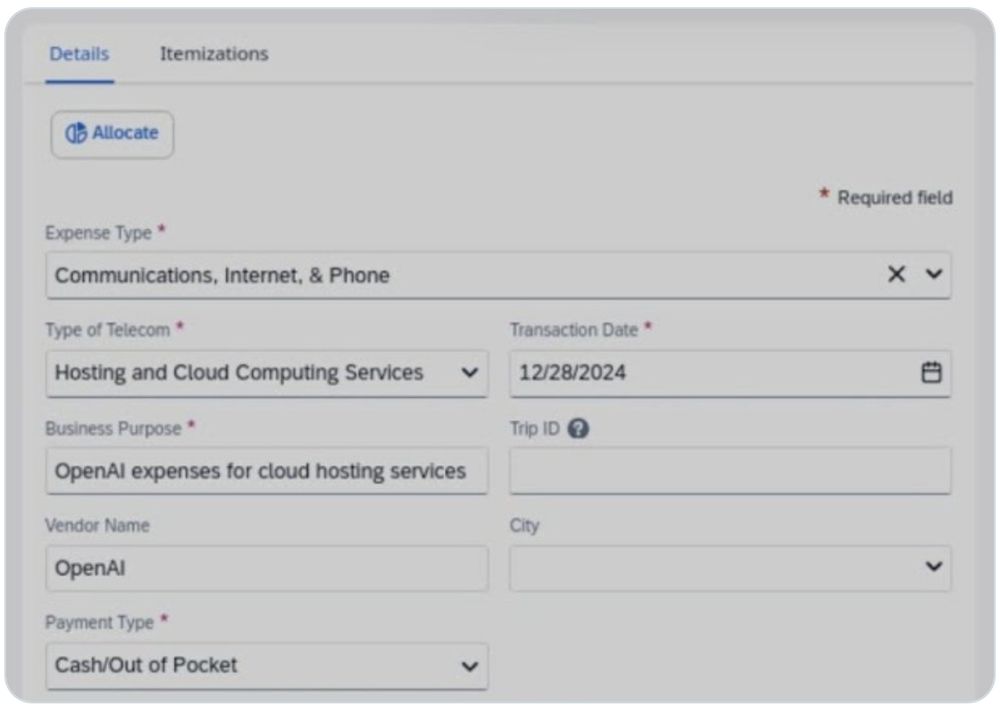

I can imagine this becoming powerful (though it's not very detailed right now).

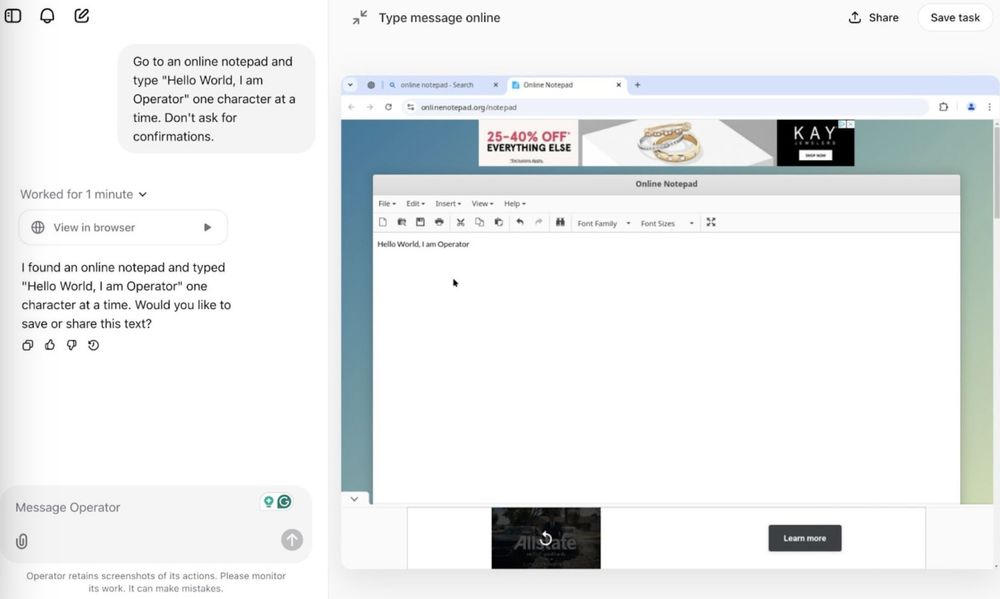

Some insights on what worked, what broke, and why this matters for the future of agents 🧵

We analyzed every instance of political AI use this year collected by WIRED. New essay w/@random_walker: 🧵

Come through to discuss the future of AI, why AI isn't an existential risk, how we can build AI in/for the public, and what goes into writing a book.

Looking forward to seeing some of you!

@randomwalker.bsky.social and I have been working on this for the past two years, and we can't wait to share it with the world.

Preorder: princeton.press/gpl5al2h
