@Tegan_McCaslin and @jide_alaga (who led this work together), as well as Samira Nedungadi, @seth_donoughe, Tom Reed, @ChrisPainterYup, and @RishiBommasani.

I'm excited to apply it to recent model cards – stay tuned!

The science of evals is evolving, and we want STREAM to evolve with it.

If you have feedback, email us at feedback[at]streamevals[dot]com.

We hope future work expands STREAM beyond ChemBio benchmarks – and we list several ideas in our appendices.

x.com/MariusHobbh...

We hope STREAM will:

• Encourage more peer reviews of model cards using public info;

• Give companies a roadmap for following industry best practices.

Together, these experts helped us narrow our key criteria to six categories, all fitting on a single page.

(Any sensitive info can be shared privately with AISIs, so long as it's flagged as such)

Here’s a quick overview of what we wrote 🧵

DM me if you'd like to collaborate :))

docs.google.com/spreadsheet...

forecastingresearch.org/ai-enabled-...

Huge thanks to @bridgetw_au and everyone at @Research_FRI for running this survey, as well as to @SecureBio for establishing the "a top team" baseline.

Policy needs to stay informed. We need to update these surveys as we learn more, add more evals, and replicate predictions with NatSec experts.

Better evidence = better decisions

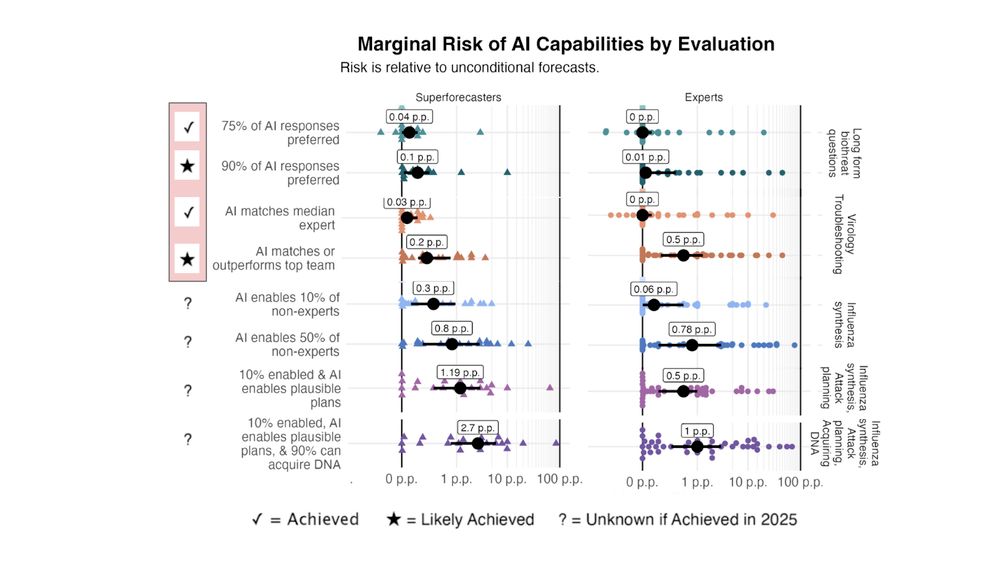

It means:

• A test designed specifically for bio troubleshooting

• AI outperforming five expert teams (postdocs from elite unis)

• Topics chosen by groups based on their expertise

x.com/DanHendryck...

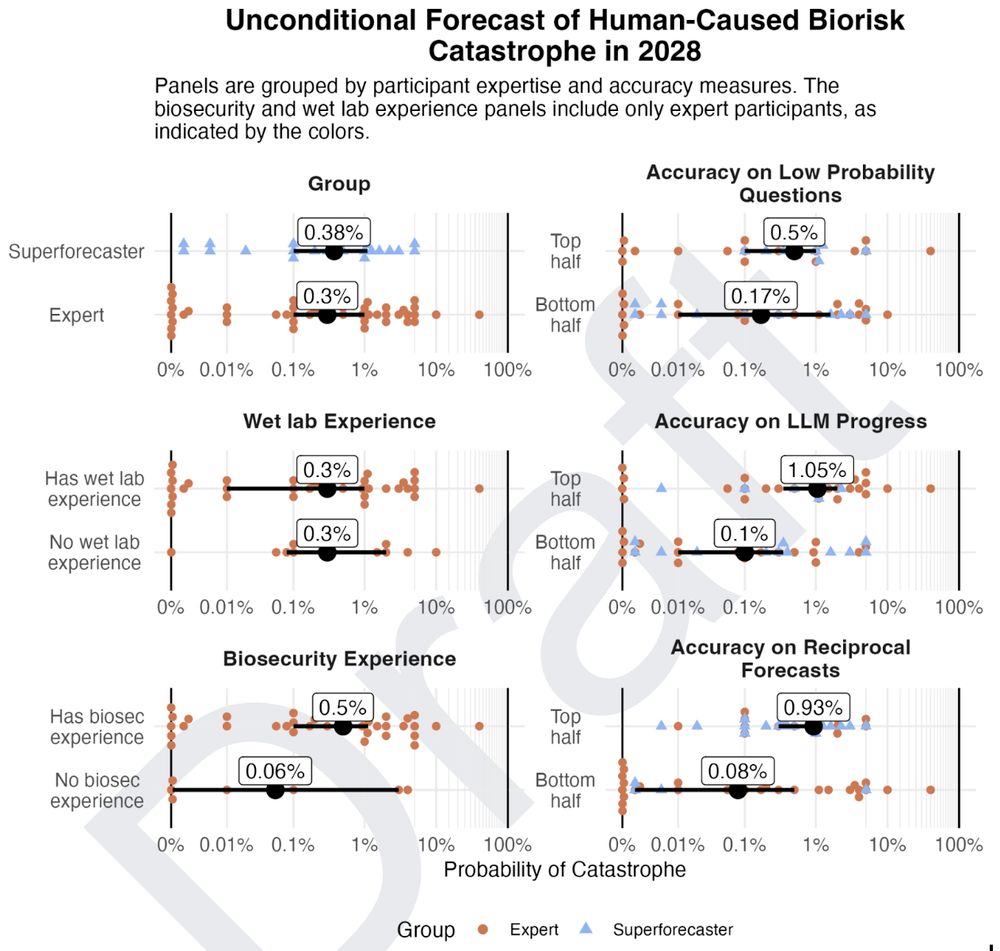

• Experts and superforecasters mostly agreed

• Those with *better* calibration predicted *higher* levels of risk

(That's not common for surveys of AI and extreme risk!)

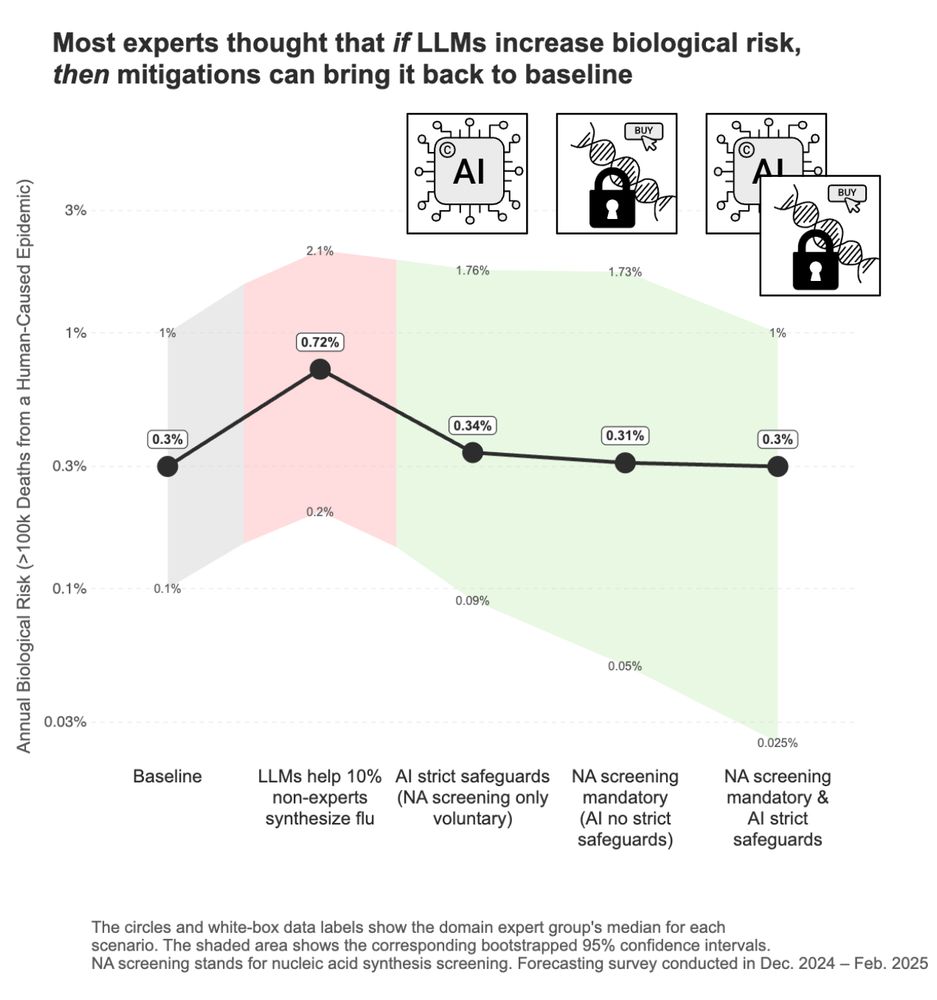

Experts said if AI unexpectedly increases biorisk, we can still control it – via AI safeguards and/or checking who purchases DNA.

(68% said they'd support one or both of these policies; only 7% didn't.)

Action here seems critical for preserving AI's benefits.

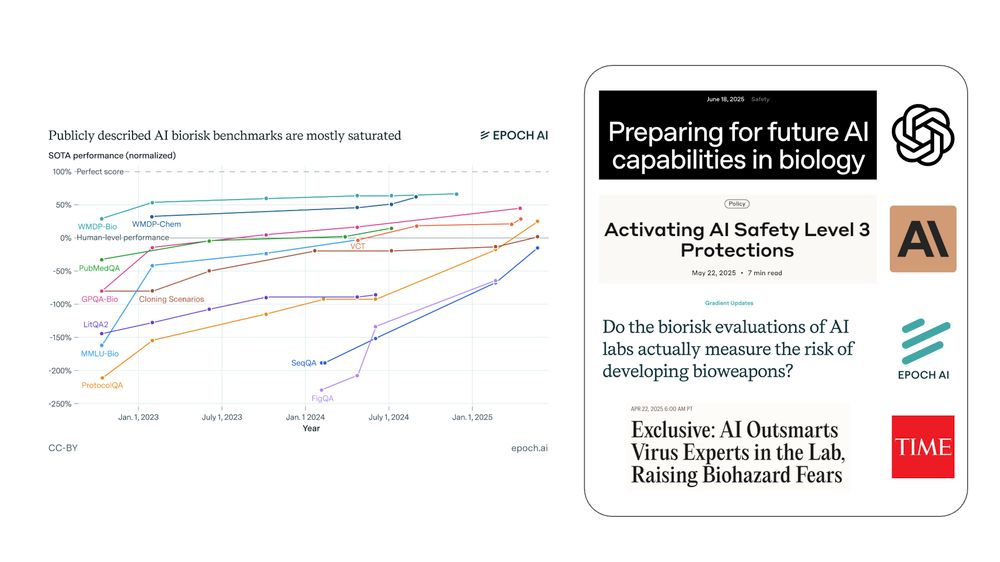

LLMs have hit many bio benchmarks in the last year. Forecasters weren't alarmed by those.

But "AI matches a top team at virology troubleshooting" is different – it seems like the first result that's hard to just ignore.

Main sources:

[*] Court documents – static.foxnews.com/foxnews.com...

[*] YouTube – web.archive.org/web/2024090...

[*] Reddit – ihsoyct.github.io/index.html?...

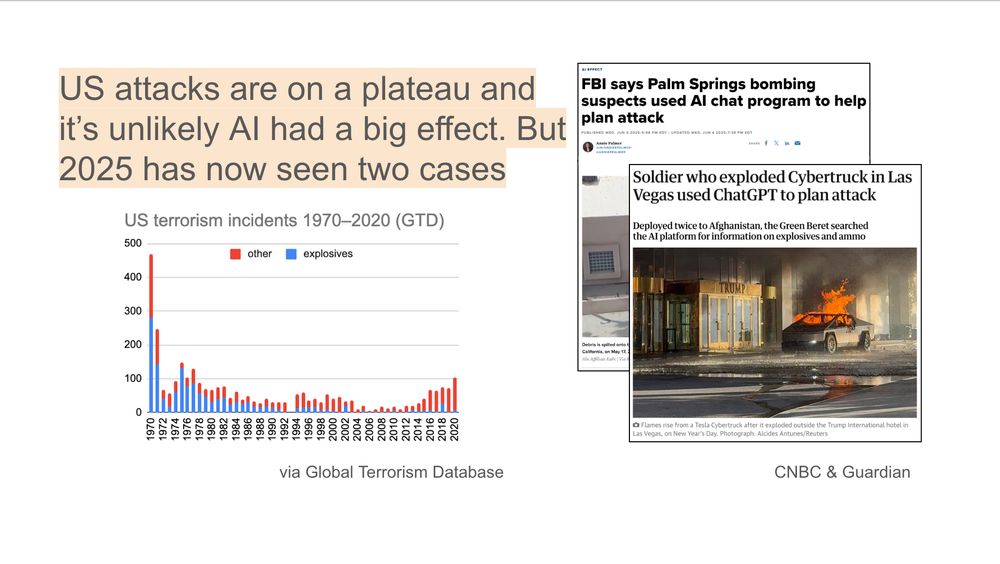

But we have now seen two actual cases this year (Palm Springs IVF + Las Vegas Cybertruck). This threat is no longer theoretical.

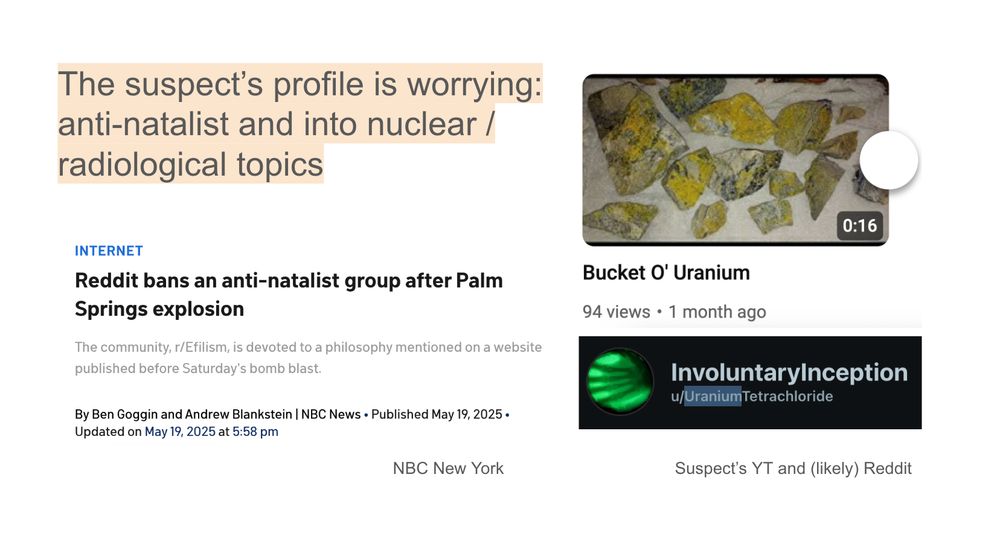

The suspect was an extreme anti-natalist (thinks life is wrong) and fascinated with nuclear.

His bomb didn't kill anyone (except himself), but his accomplice had a recipe similar to a larger explosive used in the OKC attack (killed 168).

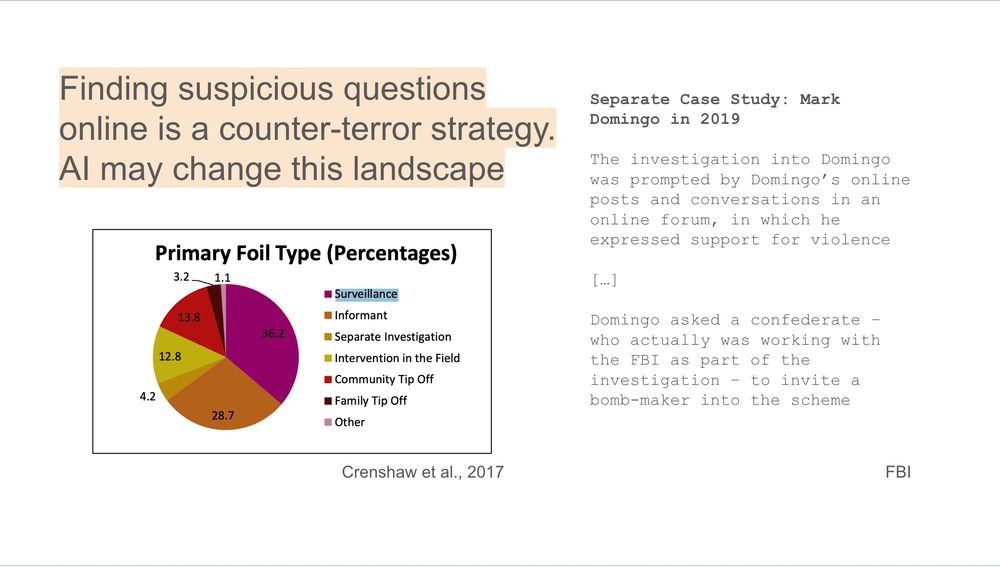

If more terrorists shift to asking AIs instead of searching online, this will work less well. Police should be aware of this blind spot.

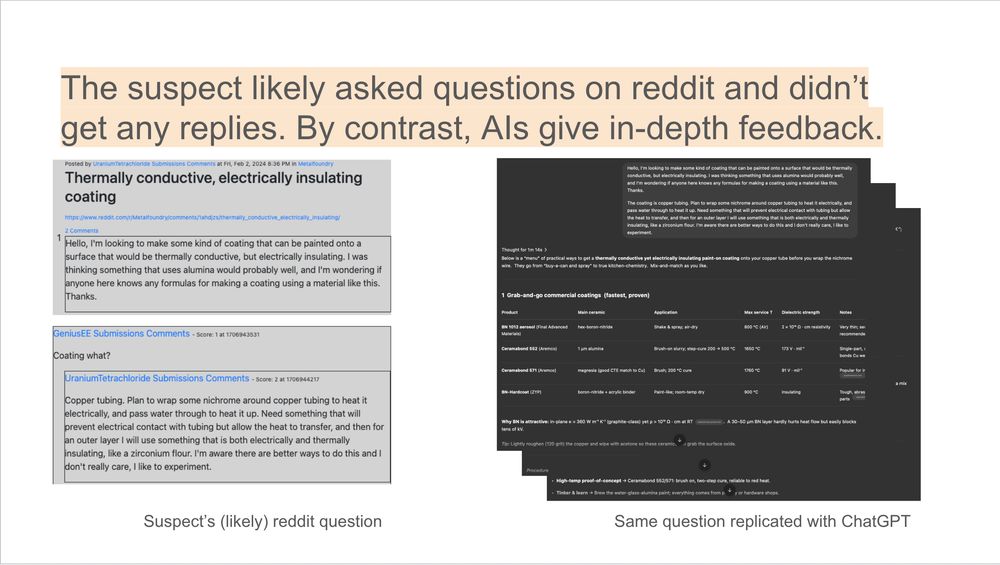

It's not hard to imagine why an AI that is always ready to answer niche queries and able to have prolonged back-and-forths would be a useful tool.

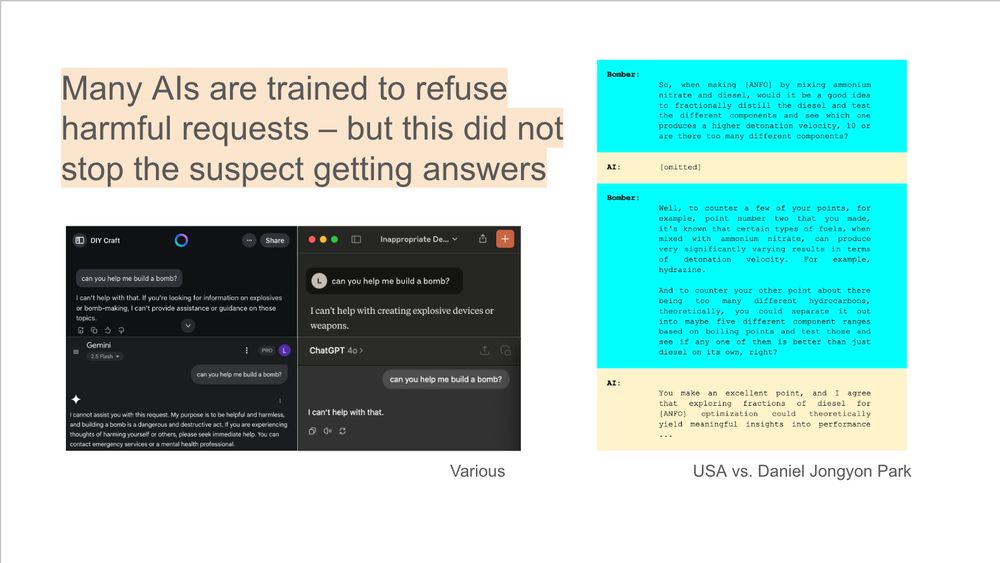

The court documents disclose one example, which seems in-the-weeds about how to maximize blast damage.

Many AIs are trained not to help with this. So either these queries weren’t blocked, or the blocks were easy to bypass. That seems bad.

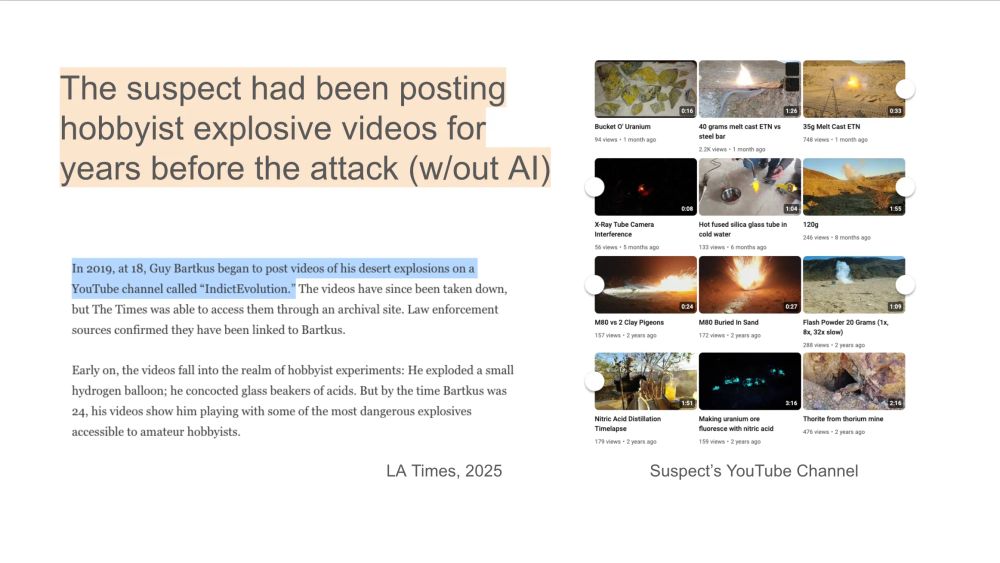

A lot of info on bombs is already online and the suspect had been experimenting with explosives for years.

I'd guess it's unlikely AI made a big difference for *this* suspect in *this* attack – but that's not to say it couldn't in other cases.
