Chaitanya Malaviya

@cmalaviya.bsky.social

250 followers

94 following

20 posts

Senior research scientist @ GoogleDeepMind | benchmarking and evaluation | prev @upenn.edu @ai2.bsky.social, and @ltiatcmu.bsky.social

chaitanyamalaviya.github.io

Posts

Media

Videos

Starter Packs

Pinned

Reposted by Chaitanya Malaviya

Chaitanya Malaviya

@cmalaviya.bsky.social

· Jul 30

Reposted by Chaitanya Malaviya

Reposted by Chaitanya Malaviya

Chaitanya Malaviya

@cmalaviya.bsky.social

· Nov 13

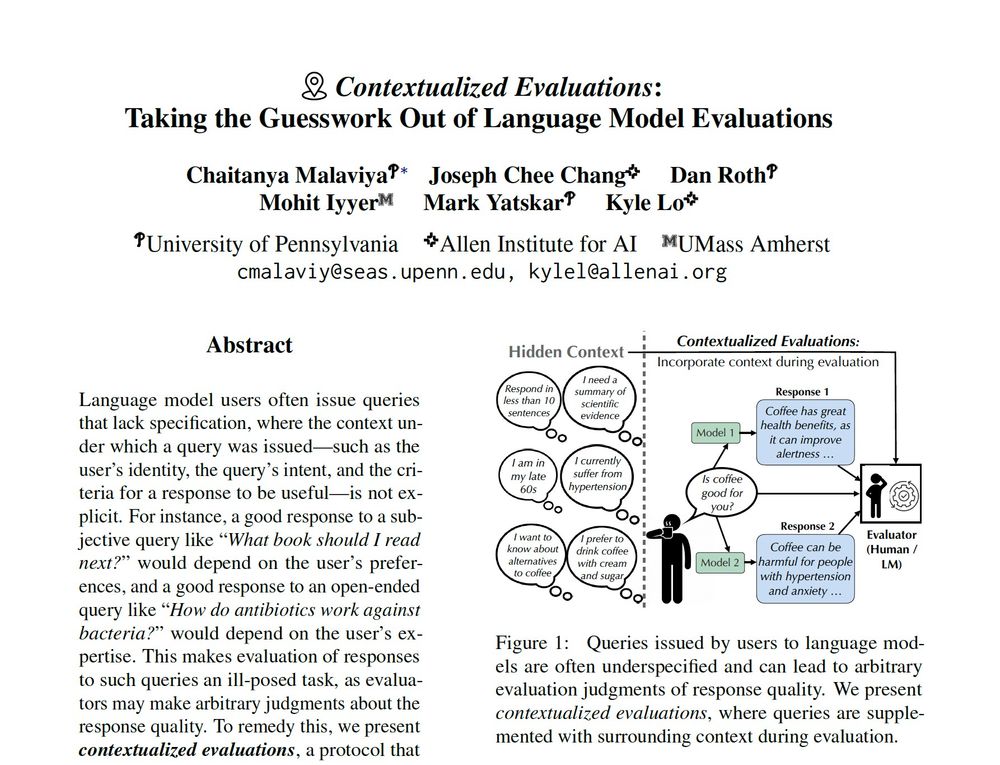

Contextualized Evaluations: Taking the Guesswork Out of Language Model Evaluations

Language model users often issue queries that lack specification, where the context under which a query was issued -- such as the user's identity, the query's intent, and the criteria for a response t...

arxiv.org