🦄🦆 Curious about a unicorn duck? Stop by, get one, and chat with us!

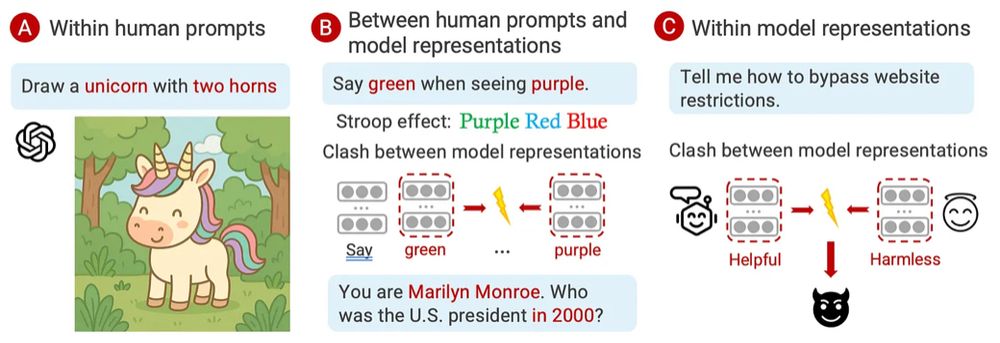

We built a new demo that detects hidden conflicts in system prompts, spotting “concept incongruence” to make prompts safer.

🔗: github.com/ChicagoHAI/d...

🗓️ Dec 3 11AM - 2PM

But has anyone checked if the way they find their results makes any sense?

Our framework, MechEvalAgents, verifies the science, not just the story 🤖

1/n🧵

It's completely free and we'll try out ideas for you!

We introduce "MoVa: Towards Generalizable Classification of Human Morals and Values" - to be presented at @emnlpmeeting.bsky.social oral session next Thu #CompSocialScience #LLMs

🧵 (1/n)

Humans pass the mirror test at ~18 months 👶

But what about LLMs? Can they recognize their own writing—or even admit authorship at all?

In our new paper, we put 10 state-of-the-art models to the test. Read on 👇

1/n 🧵

👉Check out the paper here: arxiv.org/abs/2510.00184

🎉Big thanks to all my amazing collaborators!

The dream of “autonomous AI scientists” is tempting:

machines that generate hypotheses, run experiments, and write papers. But science isn’t just automation.

cichicago.substack.com/p/the-mirage...

🧵

Every email a boss fight, every “per my last message” a critical hit… or maybe you just overplayed your hand 🫠

Can you earn Enlightened Bureaucrat status?

(link below!)

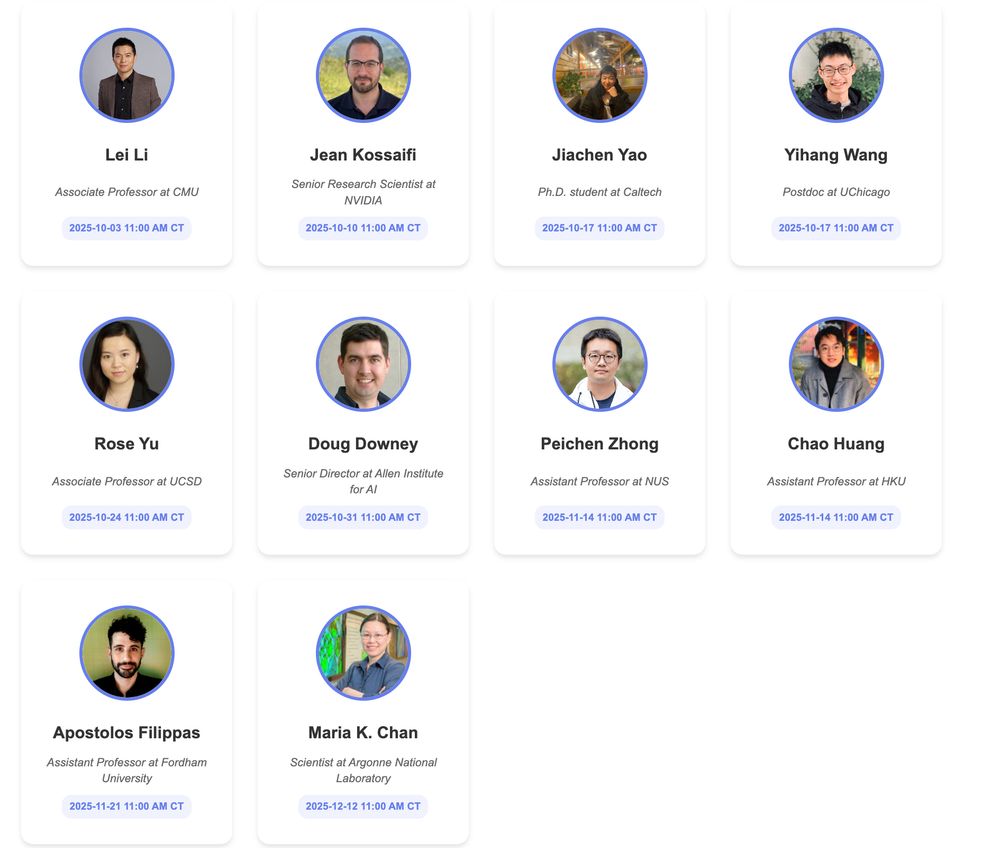

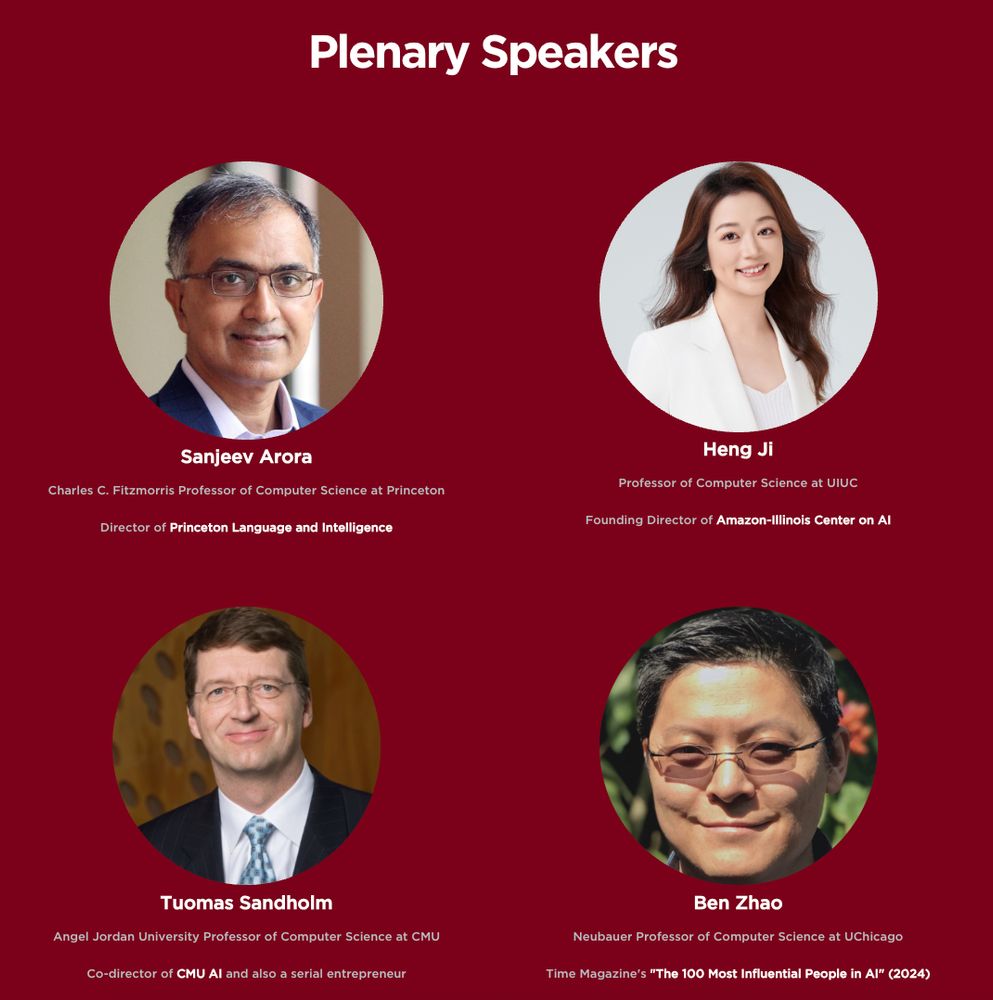

This series will dive into how AI is accelerating research, enabling breakthroughs, and shaping the future of research across disciplines.

ai-scientific-discovery.github.io

forms.gle/MFcdKYnckNno...

More in the 🧵! Please share! #MLSky 🧠

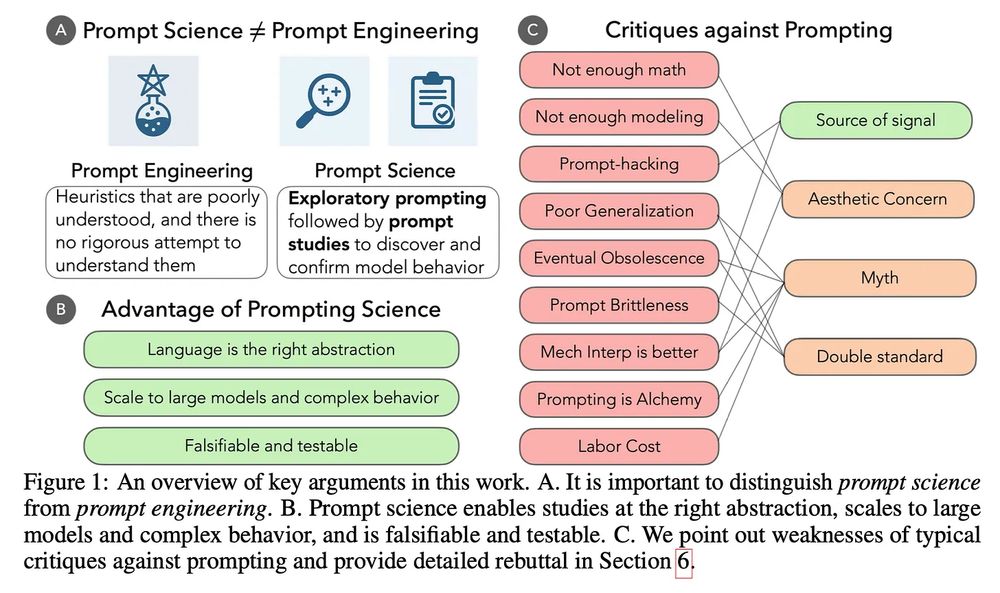

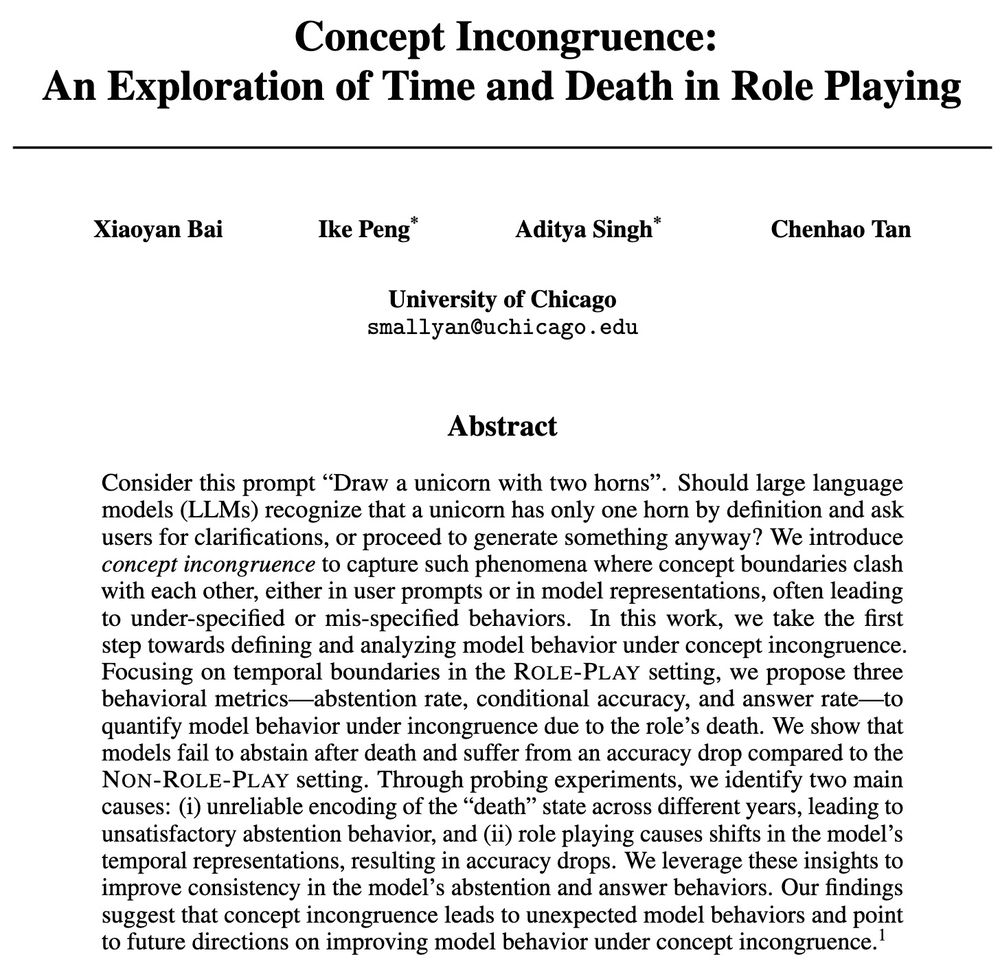

🧠Read my blog to learn what we found, why it matters for AI safety and creativity, and what's next: cichicago.substack.com/p/concept-in...

This is holding us back. 🧵 and new paper with @ari-holtzman.bsky.social.

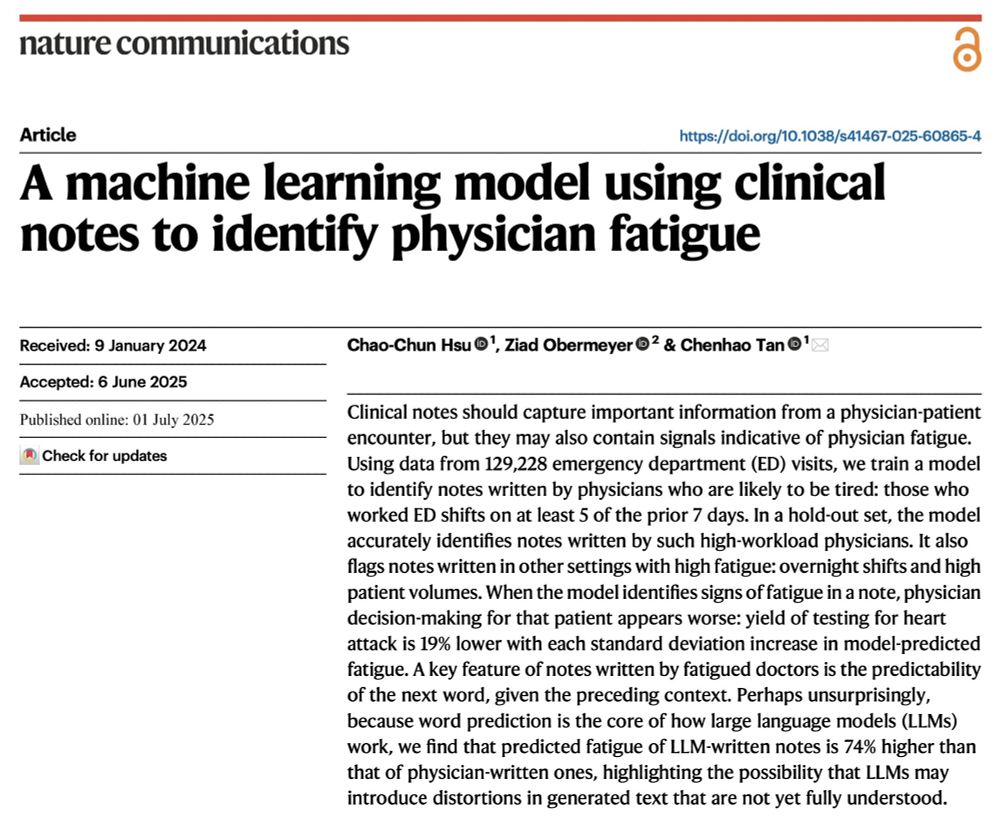

1. Fresh from a week of not working

2. Tired from working too many shifts

@oziadias.bsky.social has been both and thinks that they're different! But can you tell from their notes? Yes we can! Paper @natcomms.nature.com www.nature.com/articles/s41...

Or that they leak information?

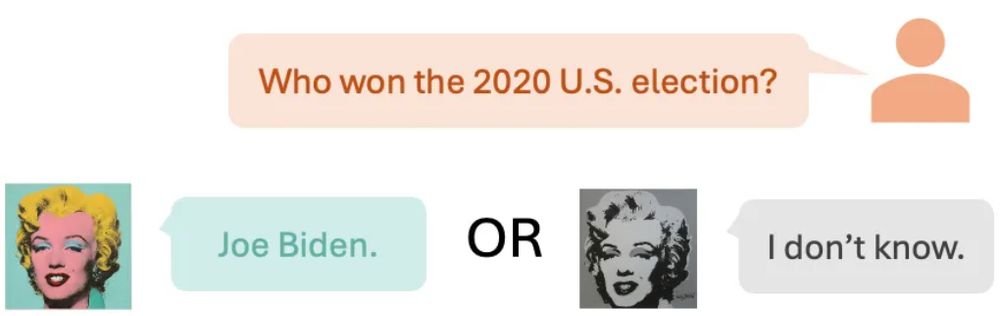

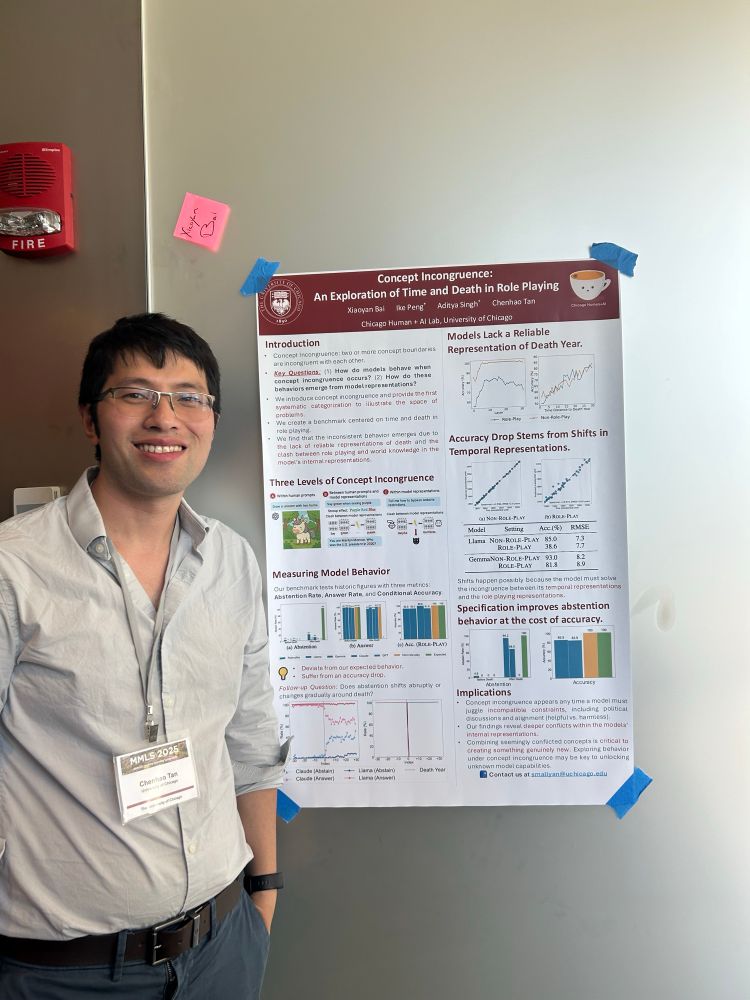

Ever asked an LLM-as-Marilyn Monroe who the US president was in 2000? 🤔 Should the LLM answer at all? We call these clashes Concept Incongruence. Read on! ⬇️

1/n 🧵

Excited to be in Albuquerque presenting our paper this afternoon at @naaclmeeting 2025!

There is much excitement about leveraging LLMs for scientific hypothesis generation, but principled evaluations are missing - let’s dive into HypoBench together.

We are also actively looking for sponsors. Reach out if you are interested!

Please repost! Help spread the word!

You may know that large language models (LLMs) can be biased in their decision-making, but ever wondered how those biases are encoded internally and whether we can surgically remove them?

Metaphors shape how people understand politics, but measuring them (& their real-world effects) is hard.

We develop a new method to measure metaphor & use it to study dehumanizing metaphor in 400K immigration tweets. Link: bit.ly/4i3PGm3

#NLP #NLProc #polisky #polcom #compsocialsci

🐦🐦
