We find that Direct S2TT scales more reliably than Chain of Thought (CoT) prompting when trained on pseudo-labeled data.

[1/8]

We find that they rely more on the transcript and behave closer to cascade systems than expected.

[1/9]

This work advances single-stage Non-Autoregressive TTS based on audio token modeling.

🧵

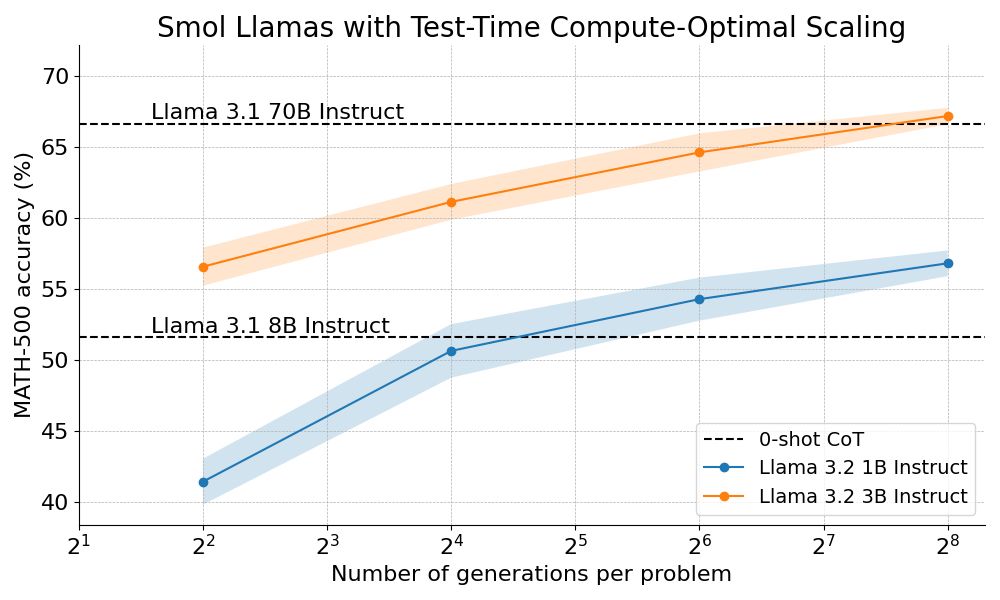

How? By combining step-wise reward models with tree search algorithms :)

We're open-sourcing the full recipe and sharing a detailed blog post 👇

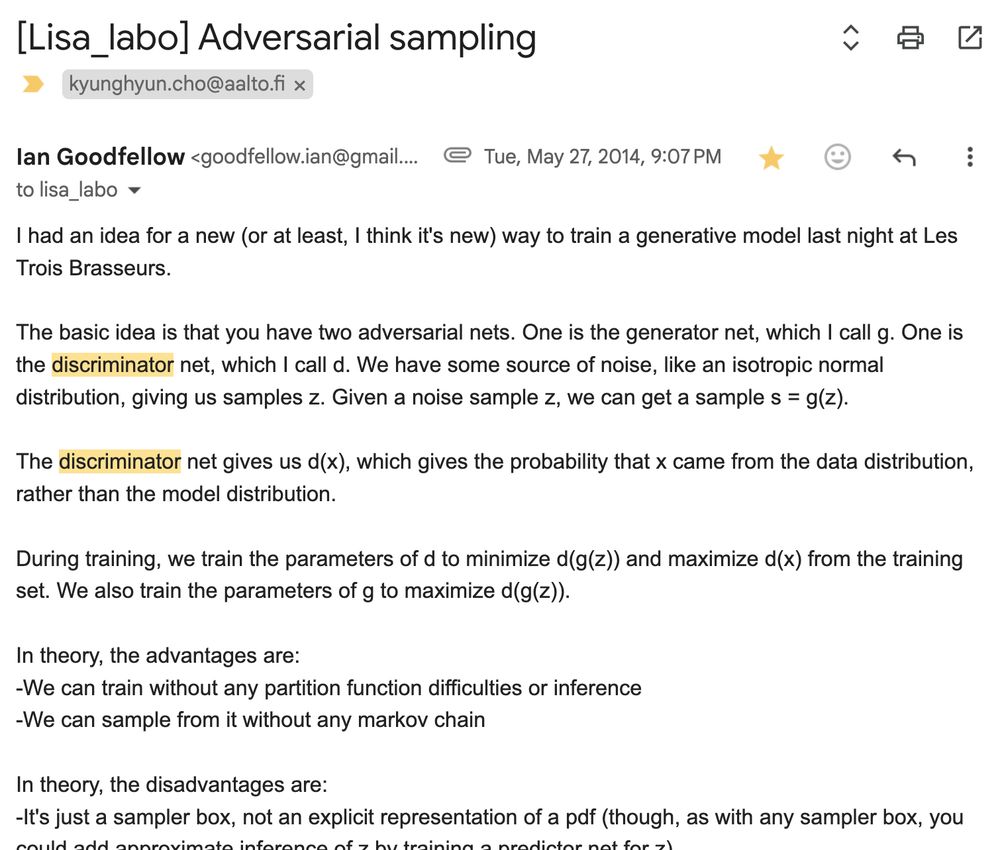

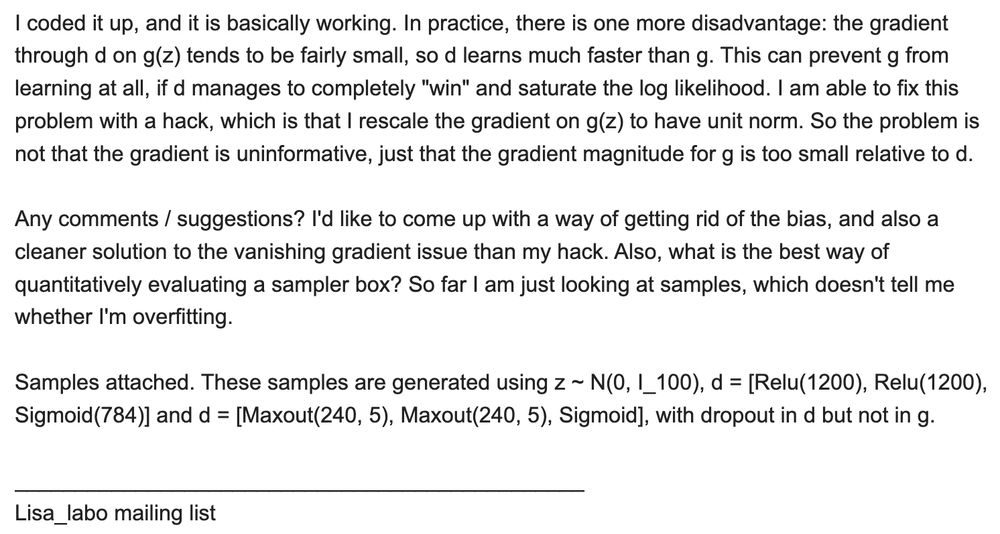

this award reminds me of how GAN started with this one email Ian sent to the Mila (then Lisa) lab mailing list in May 2014. super insightful and amazing execution!

pdf ❌

abs ✅
