📄 Paper: arxiv.org/abs/2510.03093

[8/8]

[7/8]

[6/8]

Direct scales much more steadily, closing the initial gap with CoT.

[5/8]

We follow this approach and ask: as pseudo-labeled S2TT data grows, do CoT and Direct scale in the same way?

[4/8]

[3/8]

This usually surpasses Direct S2TT, where the model produces a translation from speech in one step.

[2/8]

[8/9]

CoT scores remain close to cascade, which suggests that prosodic cues are used only minimally.

[7/9]

Instead, translation quality drops sharply, to a level almost identical to the cascade baseline.

[6/9]

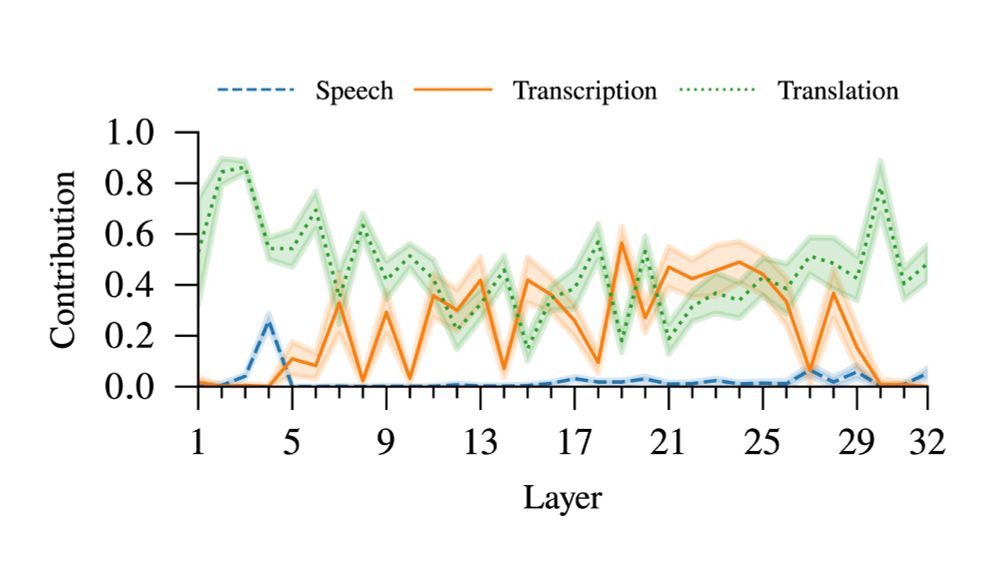

Input attribution analysis shows that, during translation, the model uses information mainly from the transcript rather than the audio.

[5/9]

- How much the model relies on speech versus text, measured through interpretability analysis

- How robust it is to errors in the transcript

- How well it uses prosody during translation

[4/9]

Or does it mainly rely on the transcript and ignore the speech signal?

We compare CoT directly with cascade systems to study this question.

[3/9]

This is often assumed to help, because the model has access to both speech and transcript, which should reduce error propagation and allow the use of acoustic cues.

[2/9]

Preprint: arxiv.org/abs/2505.24691

arxiv.org/abs/2409.11003
