[7/8]

[6/8]

Direct S2TT scales much more steadily, closing its initial gap with CoT.

[5/8]

We follow this approach and ask: as pseudo-labeled S2TT data grows, do CoT and Direct scale in the same way?

[4/8]

We find that Direct S2TT scales more reliably than Chain-of-Thought (CoT) prompting when trained on pseudo-labeled data.

[1/8]

[8/9]

Instead, translation quality drops sharply, almost exactly as it does for the cascade baseline.

[6/9]

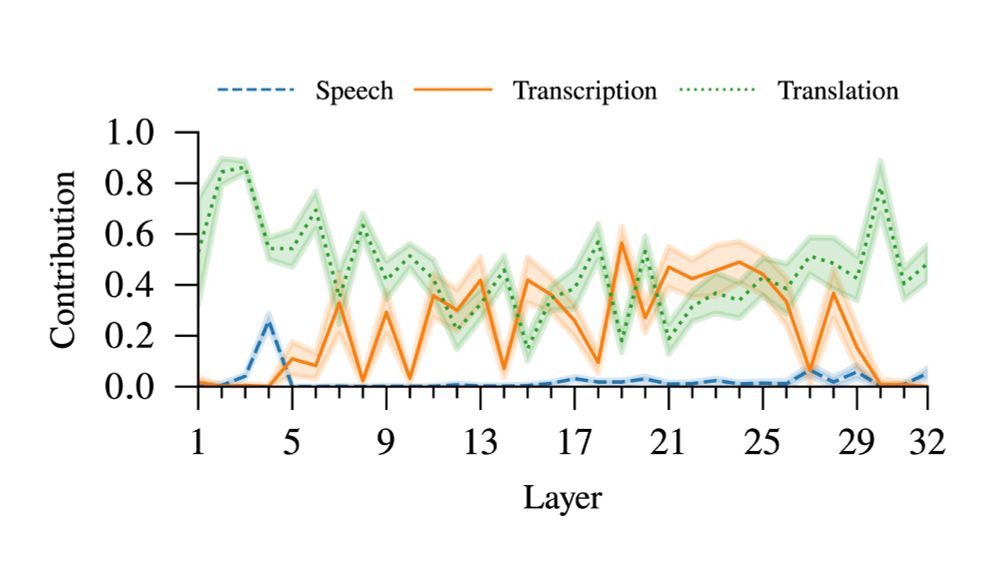

Input attribution analysis shows that, during translation, the model uses information mainly from the transcript rather than the audio.

[5/9]

We find that they rely more on the transcript and behave more like cascade systems than expected.

[1/9]
