Maxwell Madden

@maxwellmadden.bsky.social

49 followers

98 following

4 posts

Neuroscience | Cognitive/Behavioral Flexibility | Neuromodulation | Anterior Cingulate Cortex | Postdoctoral Fellow at Rutgers University | he/him/his

Maxwell Madden

@maxwellmadden.bsky.social

· Aug 21

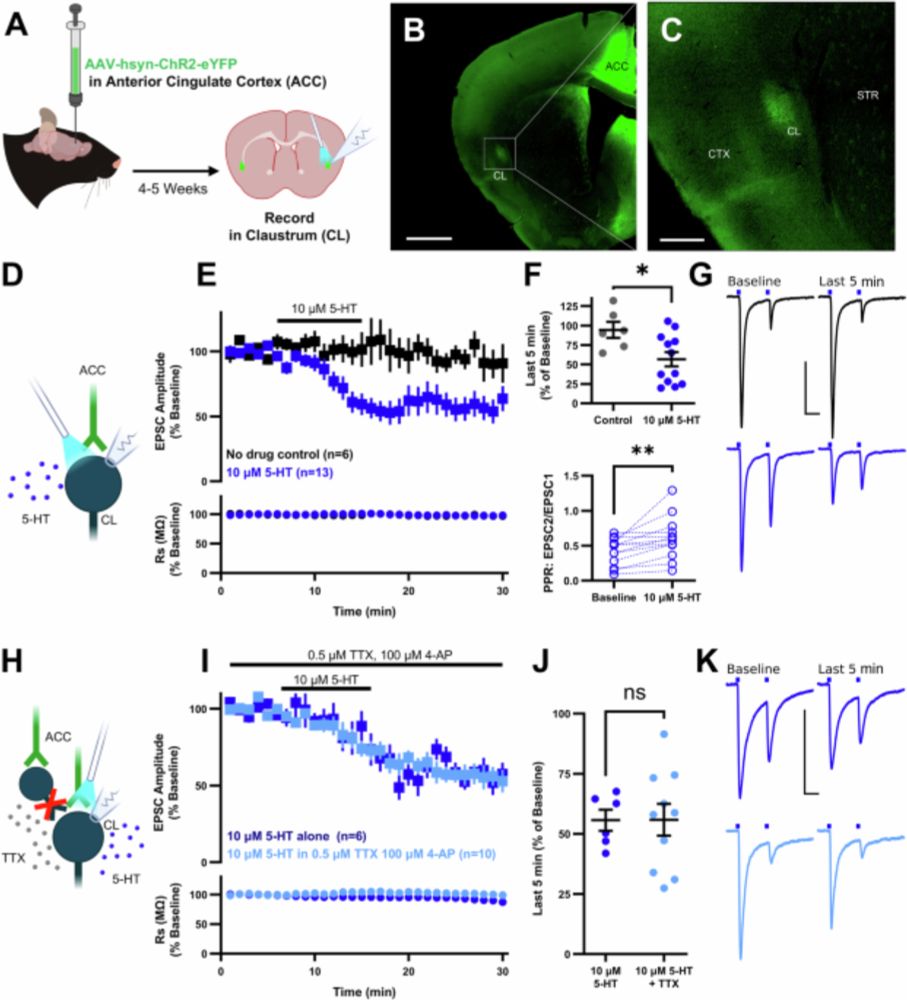

Serotonin and psilocybin activate 5-HT1B receptors to suppress cortical signaling through the claustrum - Nature Communications

Our basic understanding of neuromodulation in the claustrum remains limited. Here, Madden et al. identify a key mechanism by which serotonin and the psychedelic psilocybin modulate cortical signalling...

www.nature.com
